The components of a machine learning solution

- Data Generation: Every machine learning application lives off data. That data has to come from somewhere.

- Data Collection: Data is only useful if it’s accessible, so it needs to be stored – ideally in a consistent structure and conveniently in one place.

- Feature Engineering Pipeline: We have to select, transform, combine, and otherwise prepare our data so the algorithm can find useful patterns.

- Training: We apply algorithms, and they learn patterns from the data. Then they use these patterns to perform particular tasks.

- Evaluation: We need to carefully test how well our algorithm performs on data it hasn’t seen during training.

- Task Orchestration: Feature engineering, training, and prediction all need to be scheduled on our compute infrastructure (such as AWS or Azure) – usually with non-trivial interdependence.

- Prediction: We use the model we’ve trained to perform new tasks and solve new problems.

- Infrastructure: Even in the age of the cloud, the solution has to live and be served somewhere. This will require setup and maintenance.

- Authentication: This keeps our models secure and makes sure only those who have permission can use them.

- Interaction: We need some way to interact with our model and give it problems to solve. Usually this takes the form of an API.

- Monitoring: We need to regularly check our model’s performance. This usually involves periodically generating a report or showing performance history in a dashboard.

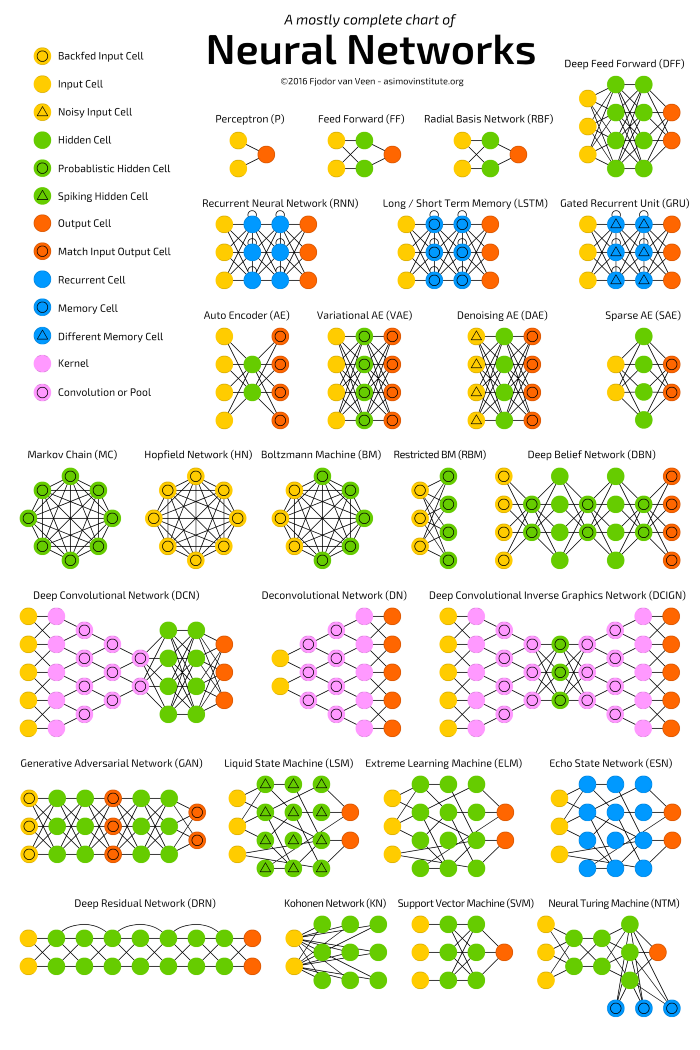
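To make the flow between some of these components concrete, here is a minimal sketch of data generation, feature engineering, training, evaluation, and prediction chained together. Everything in it is an illustrative assumption rather than part of the original material: the toy data, the function names, and the "model" (a single learned threshold) are hypothetical stand-ins for real components.

```python
import random

def generate_data(n, seed=0):
    """Data generation (hypothetical toy data): label is 1 when x > 5."""
    rng = random.Random(seed)
    xs = [rng.uniform(0, 10) for _ in range(n)]
    return [(x, 1 if x > 5 else 0) for x in xs]

def engineer_features(x):
    """Feature engineering: scale the raw value into the range [0, 1]."""
    return x / 10.0

def train(rows):
    """Training: learn a threshold halfway between the two class means."""
    pos = [f for f, y in rows if y == 1]
    neg = [f for f, y in rows if y == 0]
    return (sum(pos) / len(pos) + sum(neg) / len(neg)) / 2

def predict(threshold, feature):
    """Prediction: apply the learned threshold to a new feature value."""
    return 1 if feature > threshold else 0

def evaluate(threshold, rows):
    """Evaluation: accuracy on rows that were not used for training."""
    correct = sum(predict(threshold, f) == y for f, y in rows)
    return correct / len(rows)

# Collect the data in one place, then split it into training and held-out rows.
data = [(engineer_features(x), y) for x, y in generate_data(200)]
train_rows, test_rows = data[:150], data[150:]

model = train(train_rows)
accuracy = evaluate(model, test_rows)
print(f"threshold={model:.2f} accuracy={accuracy:.2f}")
```

In a real solution each function would be a separate, scheduled task, and the remaining components (orchestration, infrastructure, authentication, interaction, monitoring) would wrap around this core.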

Neural Networks

A CNN is a neural network: an algorithm used to recognize patterns in data. Neural networks in general are composed of neurons organized in layers, and each neuron has its own learnable weights and bias. Let’s break down a CNN into its basic building blocks.

- A tensor can be thought of as an n-dimensional matrix. In the CNN above, tensors will be 3-dimensional with the exception of the output layer.

- A neuron can be thought of as a function that takes in multiple inputs and yields a single output. The outputs of neurons are represented above as the red → blue activation maps.

- A layer is simply a collection of neurons with the same operation, including the same hyperparameters.

- Kernel weights and biases, while unique to each neuron, are tuned during the training phase, and allow the classifier to adapt to the problem and dataset provided. They are encoded in the visualization with a yellow → green diverging colorscale. The specific values can be viewed in the Interactive Formula View by clicking a neuron or by hovering over the kernel/bias in the Convolutional Elastic Explanation View.

- A CNN conveys a differentiable score function, which is represented as class scores in the visualization on the output layer.
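The neuron and layer definitions above can be sketched in a few lines. This is a simplified illustration, not the implementation behind the visualization: it uses a plain weighted sum rather than a convolution, and the ReLU activation, the function names, and the example numbers are all assumptions chosen for the sketch.

```python
def relu(z):
    """Assumed activation function: pass positive values, clamp negatives to 0."""
    return max(0.0, z)

def neuron(inputs, weights, bias):
    """A neuron: multiple inputs in, a single output out."""
    z = sum(x * w for x, w in zip(inputs, weights)) + bias
    return relu(z)

def layer(inputs, weight_rows, biases):
    """A layer: neurons that apply the same operation, each with its own weights and bias."""
    return [neuron(inputs, w, b) for w, b in zip(weight_rows, biases)]

# Two neurons looking at the same two inputs (hypothetical values).
out = layer([1.0, 2.0], [[0.5, -1.0], [1.0, 1.0]], [0.0, -1.0])
print(out)  # [0.0, 2.0]
```

During training, the values in `weight_rows` and `biases` are the quantities that get tuned.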

Convolutional Neural Network

Convolutional layers use convolution operations to extract features from images. A key property of these layers is parameter sharing: the same kernel weights are used to process every part of the input image. Because the kernel slides across the whole image, it can detect a feature pattern wherever it appears, which gives a degree of translation invariance. Parameter sharing also makes the model more efficient by significantly reducing the number of trainable parameters compared to fully connected layers.
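As a rough illustration of parameter sharing, the sketch below slides one small kernel over a toy image and then compares parameter counts with a fully connected layer producing an output of the same size. The image, the kernel values, and the function name `conv2d` are assumptions made up for this example; stride 1 and no padding are assumed as well.

```python
def conv2d(image, kernel, bias=0.0):
    """Slide a single kernel over the image (stride 1, no padding)."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    out = []
    for i in range(out_h):
        row = []
        for j in range(out_w):
            total = bias
            # The same kernel weights are reused at every position (i, j).
            for a in range(kh):
                for b in range(kw):
                    total += image[i + a][j + b] * kernel[a][b]
            row.append(total)
        out.append(row)
    return out

# Toy 4x4 image with a vertical edge between columns 1 and 2.
image = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
]
kernel = [[-1, 1], [-1, 1]]  # responds to dark-to-bright vertical edges

feature_map = conv2d(image, kernel)
for row in feature_map:
    print(row)  # each row is [0.0, 2.0, 0.0]: the edge is found in every row

# Parameter count: one shared 2x2 kernel plus one bias ...
conv_params = len(kernel) * len(kernel[0]) + 1
# ... versus a fully connected layer mapping 16 inputs to 9 outputs.
n_in = len(image) * len(image[0])
n_out = len(feature_map) * len(feature_map[0])
dense_params = n_in * n_out + n_out
print(conv_params, dense_params)  # 5 153
```

The kernel responds to the edge in every row of the feature map even though it only has four weights, which is the effect the paragraph above describes.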

SoftMax. This layer applies the softmax function to the outputs of the last fully connected layer in the network, converting the raw class scores into probabilities that sum to 1.
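A minimal sketch of the softmax function follows. The input scores are made-up values, and subtracting the maximum score before exponentiating is a common numerical-stability step added here as an assumption; it does not change the result.

```python
import math

def softmax(scores):
    """Turn raw class scores into probabilities that sum to 1."""
    m = max(scores)  # shift by the max so exp() cannot overflow
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical scores from the last fully connected layer, one per class.
probs = softmax([2.0, 1.0, 0.1])
print([round(p, 3) for p in probs])  # [0.659, 0.242, 0.099]
```

The largest score always receives the largest probability, so the predicted class is unchanged; softmax only rescales the scores so they can be read as probabilities.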

References

- Understanding Convolutional Neural Network: A Complete Guide (learnopencv.com)

- CNN Explainer (poloclub.github.io)